SUMMARY

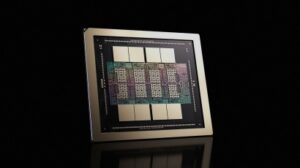

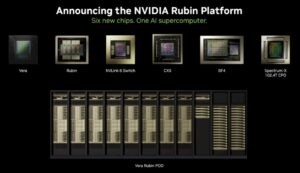

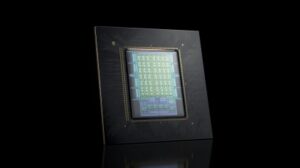

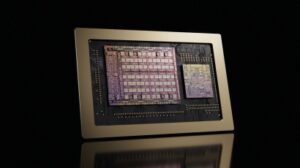

Vera Rubin is Nvidia’s first extreme-codesigned platform and will comprise six AI chips, alongside various networking technologies and system software, all working together as a single computing unit. The platform includes Rubin GPUs, Vera CPUs, NVLink 6 networking, Spectrum-X Ethernet Photonics, ConnectX-9 networking cards and BlueField-4 data processing units. This design helps reduce bottlenecks and improve performance for large AI workloads. The Vera CPU is designed to handle data movement and AI agent processing tasks.

Rubin is designed to support large-scale artificial intelligence workloads and is aimed at data centres, cloud providers and enterprises building advanced AI systems. The platform is built to lower the cost of AI computing and support faster training and deployment of AI models. Rubin computing units are already in full production, with products and services powered by these units expected to launch in the second half of 2026.

Las Vegas

One minute into Jensen Huang’s CES keynote, the crowd realised this was not about flashy gadgets. He wasn’t unveiling a gizmo or a chatbot. He was revealing what some are already calling the first real AI operating system for industry: the Vera Rubin AI computing platform. It’s a pivot away from the old AI boom, the one driven by viral apps and oversized language models, toward something deeper, more structural, and globally strategic.

For founders and investors, this isn’t Silicon Valley hype. It’s the moment AI became part of the world’s economic backbone.

How the Idea Took Shape

Two decades ago, Nvidia was primarily a graphics-chip maker. By 2026, it had matured into what some call the central nervous system of industrial AI. The Vera Rubin platform represents the latest chapter in a long internal evolution, building on the Hopper and Blackwell GPUs that powered the generative AI boom and now graduating to a full rack-scale AI system built for real-world workloads.

According to multiple insiders, the project started quietly in 2022, after Nvidia realised that simply making faster chips wasn’t enough. The problem wasn’t raw power; it was how to tie together processing, networking, security, and data handling into one cohesive, deployable stack that businesses could actually rely on.

Initial prototypes were essentially tightly coupled supercomputers, powerful but unwieldy. Teams ran into problems: congested data traffic between components, cost overruns from under-utilised silicon, and early firmware bugs that made distributed training unstable.

That’s where the breakthrough happened. Engineers pivoted from a component-first mentality to a systems-first architecture. They pulled lessons from hyperscale cloud operators and military-grade secure computing, embedding third-generation confidential computing and trusted hardware at the rack level. The result wasn’t just more compute; it was secure, efficient, predictable AI execution at scale.

Talks with early customers in aerospace, pharma and automotive helped shape this vision. One CTO from a global manufacturing firm told me that without a unified AI “OS,” gains from digital twins and adaptive automation were locked behind cost and complexity. Vera Rubin came from solving exactly that real business problem.

Inside Vera Rubin: What Makes It Different

Put simply, Vera Rubin marries five pillars:

1. Integrated Hardware Stack

It combines CPU, GPU, networking and security into a single coherent unit. No piecing together separate boxes. This reduces latency and cuts deployment risk.

2. Secure by Design

With confidential computing built in, companies can run AI on sensitive data without exposing it to administrators or cloud providers. That’s a big deal for finance, healthcare and national infrastructure.

3. Cost Efficiency at Scale

Nvidia’s own figures suggest that training “mixture of experts” (MoE) models can take one-quarter as many GPUs, and that token costs can fall to about one-seventh, compared with older setups. If those claims hold in independent testing, they translate directly into cheaper AI insights for business teams.
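Ratios like “one-quarter” and “one-seventh” are easier to weigh with numbers attached. The sketch below applies them to a hypothetical baseline workload. The workload figures (1,024 training GPUs, $7 per million tokens, 500 million tokens a month) are invented for illustration, the 4x and 7x factors are the reported claims rather than measured results, and treating the GPU figure as a training claim and the token-cost figure as a serving claim is an assumption.

```python
from dataclasses import dataclass

# Claimed reduction factors as reported above (not independently verified).
GPU_REDUCTION = 4         # "one-quarter as many GPUs" for MoE training
TOKEN_COST_REDUCTION = 7  # "about one-seventh" the token cost


@dataclass
class Workload:
    """Hypothetical workload on an older setup; all numbers are illustrative."""
    training_gpus: int
    cost_per_million_tokens: float   # US dollars
    monthly_tokens_millions: float   # tokens served per month, in millions


def project(w: Workload) -> dict[str, float]:
    """Apply the claimed reduction factors to a baseline workload."""
    new_gpus = w.training_gpus / GPU_REDUCTION
    new_rate = w.cost_per_million_tokens / TOKEN_COST_REDUCTION
    old_bill = w.cost_per_million_tokens * w.monthly_tokens_millions
    new_bill = new_rate * w.monthly_tokens_millions
    return {
        "training_gpus": new_gpus,
        "cost_per_million_tokens": new_rate,
        "monthly_bill_before": old_bill,
        "monthly_bill_after": new_bill,
        "monthly_saving": old_bill - new_bill,
    }


if __name__ == "__main__":
    baseline = Workload(training_gpus=1024,
                        cost_per_million_tokens=7.0,
                        monthly_tokens_millions=500)
    for name, value in project(baseline).items():
        print(f"{name}: {value:,.2f}")
```

On these made-up inputs the training cluster shrinks from 1,024 to 256 GPUs and a $3,500 monthly token bill drops to $500. The point is the shape of the saving, not the specific dollar amounts, which depend entirely on the assumed baseline.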

4. Industrial-Grade Reliability

Unlike cloud lab demos, this platform is built for 24/7 operations in production environments.

5. An AI Operating Fabric

Instead of GPUs just running model training, companies now have a unified runtime that can support everything from simulation software to autonomous systems.

Where Vera Rubin Is Being Applied Now

Nvidia isn’t selling this to consumers. It’s targeting enterprise and industrial AI, the sectors where predictability matters:

Manufacturing and Supply Chains

Adaptive factories reconfigure their production lines using real-time data. Early pilot sites are live in Germany and Japan, promising to drive down waste and accelerate product cycles.

Autonomous Systems

From robotaxis to logistics robots, the platform’s low-latency compute is key to physical AI — systems that sense, reason, and act in the real world.

Drug Discovery

Teams can now model complex biological systems without oversized cloud bills.

Financial Risk and Security

Because the platform is secure by design, it opens doors for regulated industries to use advanced models without leaking sensitive data.

Business Models and Competitive Dynamics

This innovation rewires the economics of AI.

Infrastructure as a Service Moves In-House

Companies that once relied solely on public cloud now have a credible on-premise alternative that’s competitively priced yet secure.

Edge Meets Cloud

When inference and training move closer to where operations happen, latency drops and privacy improves.

AI as Industrial Software

The rise of the AI operating system shifts value from standalone apps to platforms that orchestrate entire workflows. Enterprises may buy AI capability, not AI features.

Competitors like AMD and Intel may respond with their own integrated stacks, but Nvidia’s head start and developer ecosystem give it an edge. Emerging open-source initiatives will try to catch up on cost, but few have the hardware ecosystem and enterprise trust Nvidia has built.

Challenges and Regulation

Adoption won’t be frictionless. High upfront investment, skills gaps, and regulatory scrutiny around data sovereignty will shape uptake. Governments in the EU, US and Asia are tightening rules on sensitive data and AI transparency. A platform like this must prove not just performance but compliance. That’s where confidential computing and built-in governance modules will count.

Why This Matters for 2026 and Beyond

Vera Rubin marks a shift in AI’s lifecycle. We’re moving past the age of cool demos and toward a generation where AI becomes core infrastructure for industry, mobility and physical systems. It’s the foundation that will host autonomous factories, safer robotics, smarter cities and mission-critical AI workflows that have real economic value.

For builders and founders, this is a moment to ask new questions: are you creating a niche application on top of these platforms or building something that extends the underlying AI fabric?

In this new era, companies that think in systems, not just models, will lead.